Automate Compliance Reporting

- hassaansaleh

- Sep 14, 2023

- 4 min read

Updated: Dec 5, 2023

In today's ever-changing business landscape, compliance has become an integral part of the operations taking place in an organization. As businesses enter the digital era and start leveraging data for business advantage, they shoulder the responsibility of keeping business and customer information safe.

Furthermore, being complaint helps the organization to operate ethically within the legal boundaries while minimizing risk. Organizations have made great progress in automating their data analytics processes. When it comes to managing compliance data, they lag significantly. In the era of unprecedented data volumes and strict regulatory compliance, harnessing the power of data analytics is a necessity.

In this blog, we will discuss how we helped a customer to automate compliance reporting from a completely manual process. The healthcare vertical customer must follow stringent regulations. This article is limited to handling SOX compliance requirements. As part of the scope, around 80 Oracle and 100 SQL Server databases were to be analyzed for any profile and user level violations.

Background

Before the process to automate compliance reporting, the team was manually extracting the password and profile information from each database in an excel file. Each file contained around six thousand records. Prior to analyzing this information, some transformations were applied to the data. This process took weeks to complete for all databases. Furthermore, due to the manual nature of work, the error rate was high. There was also no mechanism to perform data quality checks, which is of paramount importance when dealing with compliance data. Another challenge faced was maintaining file versions as multiple people were working on the same file. Finally, refreshing data or adding new information into existing files became a lengthy process since the same operation from start to end had to be repeated.

A project to replace the Excel based manual process with an automated end to end analytics solution was begun. In the first phase, 50 oracle databases were selected based on their criticality and overall delivery time. The project involved extracting raw excel data files from the databases into blob storage and applying transformations. The data is then moved to tables in a data warehouse, which are then exposed to end users via dashboard to help automate compliance reporting. The project was divided into two workstreams: ETL and Reporting.

ETL Process

The first step in building the data pipeline was to choose the right ETL tool for the job. The team made a comparison among different tools available in the market, based on factors such as cost, maintenance, integration and support. After much deliberation, ADF (Azure Data Factory) was selected as the primary ETL tool due to its reasonable cost and strong integration with other data sources that the customer was using. For the data warehouse, Snowflake was selected since it was already being used as a primary data warehouse across the organization. Once the tools were finalized, the work started on building the data pipeline. The first step involved moving the excel files extracted from a SharePoint portal into Azure Blob storage. The COPY activity in ADF was used for this purpose. The second step was to move the files from Blob storage to Snowflake table after performing several transformations.

Below is the list of transformations performed using Mapping Data Flow and Power Query:

Appending data from multiple files into one table

Removing columns that are not required

Replacing incorrect values with correct values in multiple columns

Creating customized columns based on business rules

Once the transformations were completed, the next step was to move the data to a table in Snowflake which is already created. This was done using the COPY task in ADF by defining Snowflake as a sink (Target).

Data Quality

Apart from using data consistency verification provided by ADF, data validation was also performed by comparing row counts between Snowflake and source file. Some other validations such as data type, null value check and length check were also created in ADF to maintain data quality. The quality control mechanism was integrated with the data pipeline, so it always runs when new data is loaded.

Analytics

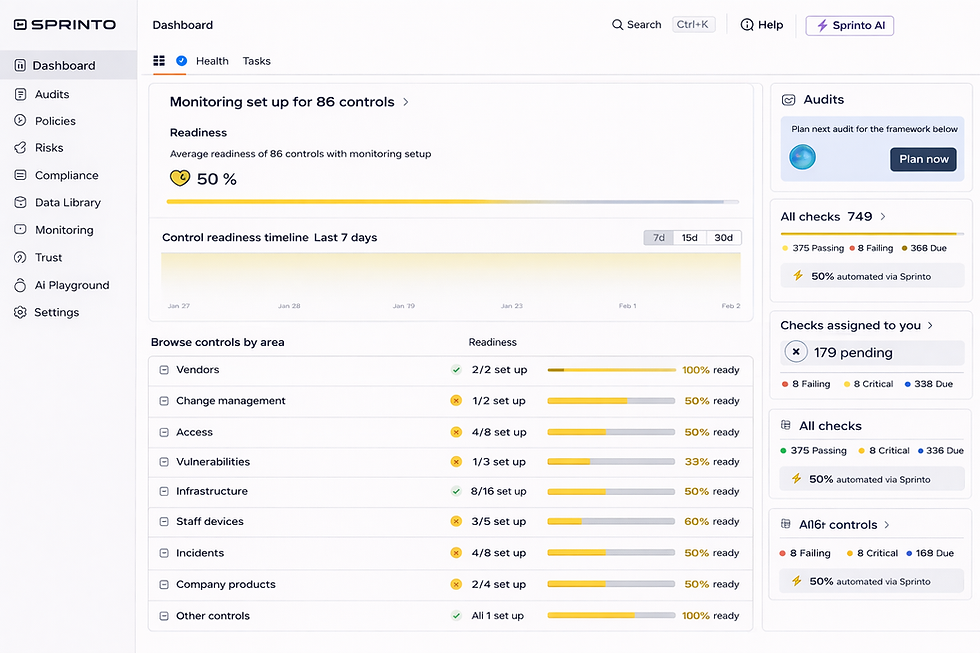

The second part of this project was to build interactive analytics for the users to analyze compliance data. For this purpose, Power BI was chosen as the preferred tool mainly because it was already being used in the organization. Leveraging Power BI's diverse set of features, powerful visualizations were designed that refined the tabular format compliance data into interactive charts, graphs, and KPIs. These visualizations not only provide a real-time alert for compliance violations but also allow for a further deep dive into specific areas of interest. By using filters, slicers, and drill-down capabilities, users were able to tailor their exploration, uncovering insights that drive informed decision-making.

Achievements

The new ETL process removed the manual work and reduced the time to deliver latest compliance data from 1 day to 4 hours

It enabled the users to report on the latest compliance data

The dashboard reduced the time taken to manually analyze the data in excel from 4 hours to just 20 mins

The new process had strict data quality checks ensuring accuracy of the data

Adding new data sources in this new system became faster and easier

Conclusion

In today's dynamic business environments, it is imperative for organizations to adhere to regulatory and compliance requirements. From refining the raw compliance data to uncovering hidden patterns, the project helped the customer use data analytics to streamline compliance reporting. After the success of the first phase, the customer decided to start the next phase covering the remaining Oracle and SQL Server databases. In future, they plan to make data analytics at the center of all their compliance and regulatory reporting across the organizations.

Comments